Deploy your Application #

Kuzzle is just a Node.js application that also needs Elasticsearch and Redis to run.

The only particularity is that Kuzzle needs to compile C and C++ dependencies, so a successful npm install requires the python and make packages as well as C and C++ compilers (the following examples use g++).
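As a quick sanity check before running npm install outside Docker, the build prerequisites listed above can be probed from a shell. This is a minimal sketch: the helper name `missing_tools` is hypothetical, and the exact command names (`python3`, `make`, `gcc`, `g++`) are assumptions that may differ on your distribution.

```shell
# Print the name of every requested command that is not found on PATH.
# missing_tools is a hypothetical helper, not part of Kuzzle.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Assumed command names for the Python interpreter, make, and the compilers.
missing="$(missing_tools python3 make gcc g++)"
if [ -n "$missing" ]; then
  # $missing is left unquoted on purpose so the names print on one line.
  echo "Missing build tools:" $missing
else
  echo "All build tools found"
fi
```

When deploying with the Dockerfile from this guide, these tools only need to exist inside the builder image, not on the host.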

At Kuzzle we like to use Docker and Docker Compose to quickly deploy applications.

In this guide we will see how to deploy a Kuzzle application to a remote server.

Prerequisites:

- SSH access to a remote server running Linux

- Docker and Docker Compose installed on this server

- A Kuzzle application

This guide covers a basic, single-node deployment of a Kuzzle application.

For production deployments, we strongly recommend deploying your application in cluster mode to take advantage of high availability and scalability.

Our team can bring its expertise and support for such deployments: get a quote

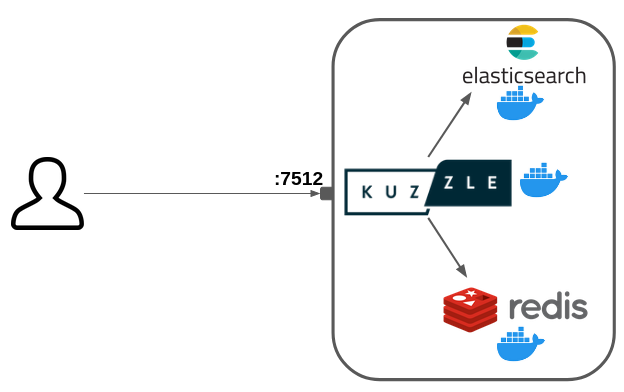

Target architecture #

We will deploy the following services in Docker containers:

- Node.js (Kuzzle)

- Elasticsearch

- Redis

We will use Docker Compose as a basic container orchestrator.

Only Kuzzle will be exposed to the internet, on port 7512.

This deployment does not use any SSL encryption (HTTPS).

A production deployment must include a reverse proxy to secure the connection with SSL.

Authentication Security in Production #

⚠️ Important Security Requirement #

You must set the kuzzle_security__authToken__secret environment variable before deploying Kuzzle to production. This secret is used to sign and verify JSON Web Tokens (JWTs) for user authentication.

Why This Matters #

- Prevents tokens from being stored in Elasticsearch

- Improves overall security

- Gives you direct control over token management

Security Notes #

- Fallback Warning: If you don't set this variable, Kuzzle will use a less secure fallback method (not recommended for production)

- Token Invalidation: Changing the secret value will immediately invalidate all existing authentication tokens

- User Impact: Users will need to log in again if the secret changes
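One possible way to satisfy the requirement above is to generate a random secret once and keep it in an env file next to the Compose file. The sketch below assumes a hypothetical file path, `deployment/kuzzle.env`, and a 32-byte secret length; neither is prescribed by Kuzzle.

```shell
# Sketch: generate a random secret and store it in an env file.
# ENV_FILE is a hypothetical path, not something Kuzzle defines.
ENV_FILE="${ENV_FILE:-deployment/kuzzle.env}"

mkdir -p "$(dirname "$ENV_FILE")"

# 32 random bytes rendered as 64 hexadecimal characters.
SECRET="$(head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n')"

# Make the file readable by the current user only before writing the secret.
umask 077
printf 'kuzzle_security__authToken__secret=%s\n' "$SECRET" > "$ENV_FILE"
```

The file could then be referenced from the kuzzle service with Compose's `env_file` attribute. Keep it out of version control, and remember that regenerating it invalidates every existing token.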

Additional Resources #

For other configuration options, see the sample configuration file.

Prepare our Docker Compose deployment #

We are going to write a docker-compose.yml file that describes our services.

First, create a deployment/ directory:

    mkdir deployment/

Then create the deployment/docker-compose.yml file and paste the following content:

    ---
    version: "3"

    services:
      kuzzle:
        build:
          context: ../
          dockerfile: deployment/kuzzle.dockerfile
        command: node /var/app/app.js
        restart: always
        container_name: kuzzle
        ports:
          - "7512:7512"
          - "1883:1883"
        depends_on:
          - redis
          - elasticsearch
        environment:
          - kuzzle_services__storageEngine__client__node=http://elasticsearch:9200
          - kuzzle_services__internalCache__node__host=redis
          - kuzzle_services__memoryStorage__node__host=redis
          - NODE_ENV=production

      redis:
        image: redis:8
        command: redis-server --appendonly yes
        restart: always
        volumes:
          - redis-data:/data

      elasticsearch:
        image: kuzzleio/elasticsearch:7
        restart: always
        ulimits:
          nofile: 65536
        volumes:
          - es-data:/usr/share/elasticsearch/data

    volumes:
      es-data:
        driver: local
      redis-data:
        driver: local

This configuration runs a Kuzzle application with Node.js alongside Elasticsearch and Redis.

Kuzzle needs compiled dependencies for the cluster and the realtime engine.

We are going to use a multi-stage Dockerfile: a first stage builds the dependencies, and a second stage based on the node:18 image runs the application.

Create the deployment/kuzzle.dockerfile file with the following content:

    # builder image
    FROM node:18 as builder

    # python3: Debian-based node:18 images no longer provide a "python" package
    RUN set -x \
      && apt-get update && apt-get install -y \
        curl \
        python3 \
        make \
        g++ \
        libzmq3-dev

    ADD . /var/app

    WORKDIR /var/app

    RUN npm ci && npm run build && npm prune --production

    # run image
    FROM node:18

    COPY --from=builder /var/app /var/app

Deploy on your remote server #
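Before copying anything, it may help to confirm that the project contains the files the Compose file and Dockerfile rely on. This is only a sketch: `check_layout` is a hypothetical helper, and the list of files is an assumption derived from this guide (`npm ci` needs a lockfile, and the Compose file points at `deployment/kuzzle.dockerfile`).

```shell
# Report which files expected by the build are missing from a project directory.
# Usage: check_layout PROJECT_DIR ; returns 0 when everything is present.
# check_layout is a hypothetical helper; adapt the file list to your project.
check_layout() {
  project_dir="$1"
  status=0
  for path in \
    package.json \
    package-lock.json \
    deployment/docker-compose.yml \
    deployment/kuzzle.dockerfile
  do
    if [ ! -f "$project_dir/$path" ]; then
      echo "missing: $path"
      status=1
    fi
  done
  return "$status"
}
```

For example, `check_layout ../playground` prints one line per missing file and returns a non-zero status if any is absent.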

Now we are going to use scp (SSH copy) to copy our application to the remote server.

    $ scp -r ../playground <user>@<server-ip>:.

Then simply connect to your server and run your application with Docker Compose:

    $ ssh <user>@<server-ip>
    [...]
    $ docker compose -f deployment/docker-compose.yml up -d

Your Kuzzle application is now up and running on port 7512!
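Containers can take a little while to become ready after `up -d` returns, so a small polling loop is one way to confirm that the HTTP endpoint actually answers. The sketch below assumes curl is installed and that the polled URL returns a successful status once Kuzzle is ready; `wait_for_http` is a hypothetical helper name.

```shell
# Poll a URL until it answers with a successful HTTP status.
# Usage: wait_for_http URL [ATTEMPTS] [DELAY_SECONDS]
# wait_for_http is a hypothetical helper, not part of Kuzzle or Docker.
wait_for_http() {
  url="$1"
  attempts="${2:-30}"
  delay="${3:-2}"
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -f makes curl fail on HTTP error statuses; the response body is discarded.
    if curl -fsS -o /dev/null --max-time 5 "$url" 2>/dev/null; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

A possible invocation from your workstation is `wait_for_http "http://<server-ip>:7512" && echo "Kuzzle is reachable"`, with the placeholder replaced by your server address.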